Solana trading infrastructure 2026: MEV, nodes, latency

Introduction: why Solana trading infrastructure matters in 2026

In high-frequency trading, the difference between profit and loss is rarely the strategy alone. It is the execution stack the strategy runs on. In 2026, Solana trading infrastructure is increasingly shaped by faster client implementations, lower-latency data propagation, and the path toward much faster finality. That shifts competitive advantage away from generic RPC access and toward the layers below it: packet handling, stake-weighted quality of service, real-time data delivery, and how close your infrastructure is to the next scheduled leader.

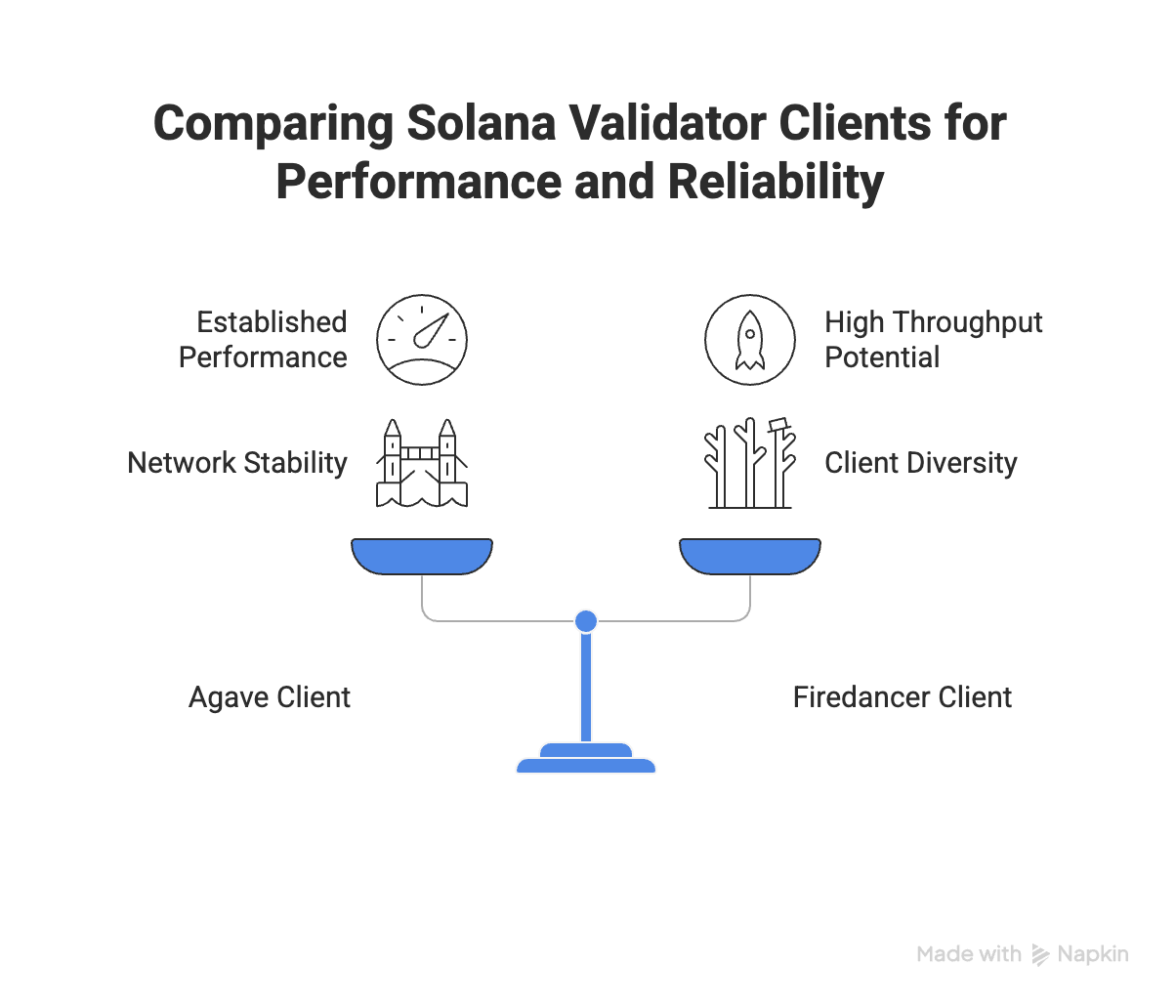

Validator clients in 2026: Agave vs Firedancer

For most of Solana’s history, the network ran on a single validator client written in Rust. That changed with the arrival of Firedancer, Jump Crypto’s ground-up reimplementation written in C. The two clients, Agave (the evolved Rust client) and Firedancer, now run in parallel across the validator set, and the implications go well beyond raw throughput numbers.

Firedancer is built to remove many of the software bottlenecks that constrained earlier Solana clients. Its architecture separates networking, transaction processing, and block propagation into highly optimized parallel paths, which is why it is widely viewed as a major performance step for the network. Public demonstrations have shown Firedancer exceeding 1M TPS in testing, which is best understood as a signal of where validator performance is heading rather than the throughput every production validator delivers today.

More importantly for anyone running production infrastructure, client diversity means a bug or edge case that takes down one client won’t halt the network. Validators running Agave keep producing blocks while Firedancer is patched, and vice versa. For institutional liquidity providers where uptime is a hard requirement, this is a structural improvement to the network’s reliability guarantees.

Validator hardware requirements (NVMe storage, 10 Gbps+ networking, substantial RAM) flow directly into the quality of the nodes traders connect to. A validator running on underpowered hardware struggles to keep up with block production, and that shows up as slot lag on your end. These specs aren’t abstract infrastructure trivia. They set the floor for what reliable connectivity looks like in practice.

Breaking the speed barrier: Alpenglow & sub-150ms finality

Alpenglow is the consensus upgrade that fundamentally changed what Solana feels like to build on. Previous consensus mechanisms required multiple rounds of vote propagation before a slot could be considered final. Validators would cast votes, wait for those votes to propagate across the network, then wait for confirmation that a supermajority had been reached. Alpenglow collapses that process by optimizing how votes are aggregated and processed, reducing time-to-confidence to under 150ms end-to-end.

The practical effect is significant. On-chain central limit order books can now compete meaningfully with centralized exchanges on latency. Liquidation engines and arbitrage strategies that previously required probabilistic assumptions about finality can now operate with near-certainty before acting on the result.

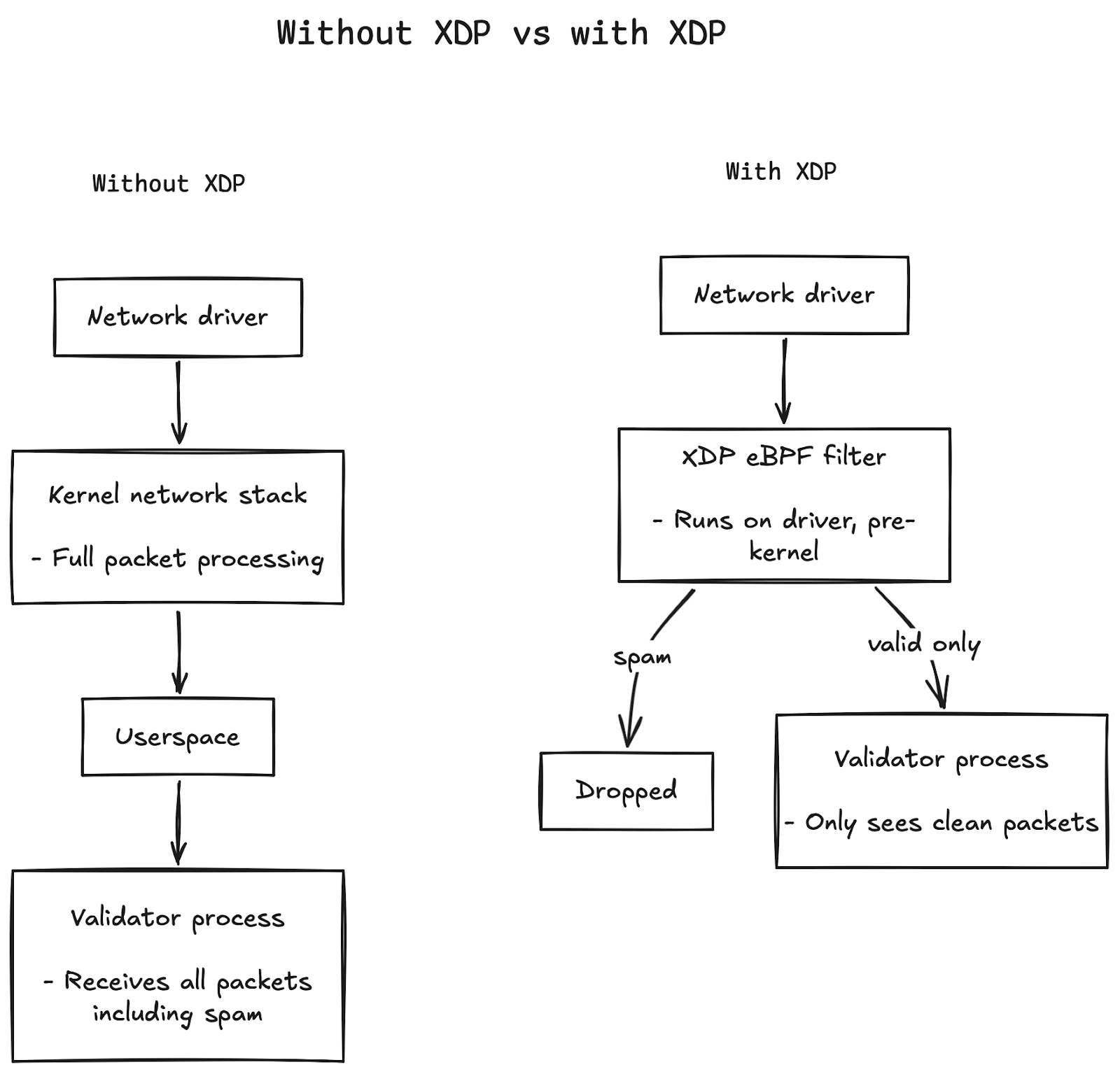

Underneath the consensus layer, XDP (eXpress Data Path) has become a standard tool in the competitive validator stack. Most networking code runs in userspace, which means packets travel through the kernel’s full network stack before your application ever sees them. XDP short-circuits that.

It runs eBPF programs directly on the network driver, so packets can be inspected and dropped before they go anywhere near the validator process. Under spam conditions, this matters a lot. The validator isn’t wasting cycles rejecting garbage. It never receives it in the first place.

Solana MEV in 2026: Jito, PBS, and execution control

Maximal extractable value (MEV) on Solana has matured considerably. The early landscape was largely about raw speed. Whoever got their transaction to the scheduled leader first won. That dynamic still exists, but the ecosystem has developed more sophisticated mechanisms layered on top of it.

Jito’s block engine introduced a more structured marketplace for block space, allowing searchers to submit bundles with attached SOL tips to validators. That moved Solana MEV beyond a pure speed race and toward a more explicit market for transaction ordering. In 2026, the better framing is not Ethereum-style PBS alone, but Solana’s own evolving execution stack: Jito’s block engine, its Block Assembly Marketplace (BAM), and application-controlled execution (ACE). Together, these systems aim to make block building more programmable, more transparent, and less dependent on brute-force network proximity alone.
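To make the idea of an explicit market for ordering more concrete, here is a toy model of tip-based bundle selection. It is not Jito’s actual auction logic: the `Bundle` type, the `select_bundles` function, and the conflict rule (two bundles conflict when they write to the same account) are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bundle:
    """Hypothetical searcher bundle: a tip plus the accounts it writes to."""
    searcher: str
    tip_lamports: int
    writes: frozenset


def select_bundles(bundles):
    """Greedy toy auction: take the highest tips first, skipping any bundle
    that writes to an account already claimed by a selected bundle."""
    selected, locked = [], set()
    for bundle in sorted(bundles, key=lambda b: b.tip_lamports, reverse=True):
        if locked.isdisjoint(bundle.writes):
            selected.append(bundle)
            locked |= bundle.writes
    return selected


# Made-up bundles: "a" and "b" compete for the same pool, "c" is independent.
bundles = [
    Bundle("a", 50_000, frozenset({"pool_1"})),
    Bundle("b", 80_000, frozenset({"pool_1", "pool_2"})),
    Bundle("c", 10_000, frozenset({"pool_3"})),
]
winners = [b.searcher for b in select_bundles(bundles)]
print(winners)
```

In this simplified model the outcome depends on the tip and on account contention, not on who reached the leader first, which is the shift the paragraph above describes.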

Application-controlled execution (ACE) addresses the user-protection side of the equation. Rather than leaving users exposed to sandwich attacks, ACE lets dApps define execution constraints at the application level, controlling transaction ordering, slippage bounds, and which actors can interact with specific instruction sequences. Healthy arbitrage still flows through. Predatory MEV that harms end users is structurally harder to execute.
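A slippage bound is the easiest of these constraints to illustrate. The sketch below is a generic check in integer base units, not ACE’s actual interface: the function names and the basis-point convention are assumptions.

```python
def min_amount_out(quoted_out: int, max_slippage_bps: int) -> int:
    """Smallest output (in base units) that still satisfies the slippage bound."""
    if not 0 <= max_slippage_bps <= 10_000:
        raise ValueError("max_slippage_bps must be between 0 and 10000")
    # Integer math avoids float rounding on token amounts.
    return quoted_out * (10_000 - max_slippage_bps) // 10_000


def within_bound(actual_out: int, quoted_out: int, max_slippage_bps: int) -> bool:
    """True if the executed output respects the bound."""
    return actual_out >= min_amount_out(quoted_out, max_slippage_bps)


# A quote of 1,000,000 base units with a 50 bps (0.5%) tolerance.
floor = min_amount_out(1_000_000, 50)
print(floor, within_bound(994_999, 1_000_000, 50))
```

A sandwich attack works by pushing the executed price past what the user expected, so a bound enforced at execution time caps how much value such an attack can extract.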

Real-time data pipelines: ShredStream and Yellowstone gRPC

For bot operators and searchers, three components matter most in the low-latency Solana data and execution path: ShredStream, Yellowstone gRPC, and Warp Transactions. They are related, but they solve different problems.

| Component | Primary role | Improves | Does not directly improve | Best for |
|---|---|---|---|---|
| ShredStream | Low-latency block data propagation | How quickly your infrastructure sees new block data as shreds are produced | Transaction submission or landing on its own | Searchers, market makers, latency-sensitive monitoring |
| Yellowstone gRPC | Push-based chain data streaming | Real-time delivery of transactions, account updates, slots, and blocks without polling | Transaction routing to the leader | Bots, indexers, event-driven trading systems |
| Warp Transactions | Optimized transaction delivery path | How quickly sendTransaction reaches the current leader | Read latency, block visibility, or account streaming | Trading bots, liquidation systems, multi-step DeFi execution |
| Standard RPC polling | General-purpose node access | Basic read access and compatibility | Low-latency streaming or optimized send path | Wallets, dashboards, non-latency-sensitive apps |

- ShredStream gives earlier access to block data than RPC polling by streaming shreds between validators.

- Yellowstone gRPC delivers real-time chain data without polling, directly from validator memory.

- Warp Transactions optimize the send path by routing transactions more directly to the leader.

🤔 In practical terms: ShredStream helps you see new information sooner, Yellowstone gRPC helps you process that information more efficiently, and Warp helps you act on it faster.
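A back-of-the-envelope model shows why push-based delivery matters for the read side. It is deliberately simplified and rests on stated assumptions: events arrive at random times relative to the polling schedule, the network path is symmetric, and server-side processing time is ignored. The function names are illustrative.

```python
def expected_polling_delay_ms(poll_interval_ms: float, rtt_ms: float) -> float:
    """Mean delay to observe an event when polling: on average half an
    interval passes before the next poll fires, then one round trip
    returns the data."""
    return poll_interval_ms / 2 + rtt_ms


def expected_push_delay_ms(rtt_ms: float) -> float:
    """Mean delay for a push stream: roughly a single one-way trip."""
    return rtt_ms / 2


# Polling every 200 ms over a 20 ms round trip vs. a push stream on the same link.
polling = expected_polling_delay_ms(200, 20)
push = expected_push_delay_ms(20)
print(polling, push)
```

Under these assumptions the polling interval dominates the delay, which is why shortening the network path alone does little for a bot that still polls.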

Chainstack trading stack: Trader Nodes and Warp Transactions

Solana Trader Nodes

Trader Nodes are regionally deployed endpoints tightly bound to a specific location and fine-tuned for low-latency, high-availability trading workloads. The regional deployment matters because Solana’s leader schedule is deterministic. Chainstack places nodes close to where block production is concentrated, so your RPC reads and transaction sends don’t travel further than they need to.
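Because the schedule is deterministic, the upcoming leaders can be looked up ahead of time. The sketch below assumes a schedule shaped like the result of the `getLeaderSchedule` JSON-RPC method (validator identity mapped to slot indices relative to the first slot of the epoch). The validator names and slot numbers are made up, and `upcoming_leaders` is an illustrative helper, not part of any SDK.

```python
def invert_schedule(schedule: dict) -> dict:
    """Turn {identity: [slot_index, ...]} into {slot_index: identity}."""
    return {
        index: identity
        for identity, indices in schedule.items()
        for index in indices
    }


def upcoming_leaders(schedule, epoch_first_slot, current_slot, lookahead=8):
    """Return (slot, identity) pairs for the next `lookahead` slots,
    starting at current_slot, that fall inside this epoch's schedule."""
    by_index = invert_schedule(schedule)
    start = current_slot - epoch_first_slot
    return [
        (epoch_first_slot + i, by_index[i])
        for i in range(start, start + lookahead)
        if i in by_index
    ]


# Made-up schedule for an epoch that starts at slot 1000.
schedule = {
    "ValidatorA": [0, 1, 2, 3, 8, 9, 10, 11],
    "ValidatorB": [4, 5, 6, 7],
}
leaders = upcoming_leaders(schedule, epoch_first_slot=1000, current_slot=1002, lookahead=4)
print(leaders)
```

Knowing which validators lead the next few slots is what makes regional placement measurable: you can compare your latency to those specific validators rather than to the network in general.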

Trader Nodes ship with ShredStream enabled by default, reducing block data propagation delay without requiring additional setup. In practice, the exact latency improvement depends on region, network conditions, and how close your Solana trading infrastructure is to the current or upcoming leader. Yellowstone gRPC is available as an add-on, replacing polling with push-based streaming for transaction and account monitoring. Here’s what a minimal subscription looks like against a Chainstack node endpoint:

```python
import grpc

# Stubs generated by running grpc_tools.protoc against
# https://github.com/rpcpool/yellowstone-grpc/tree/master/yellowstone-grpc-proto/proto
from generated import geyser_pb2, geyser_pb2_grpc


def process_transaction(update):
    """Placeholder handler; replace with your own logic."""
    print(update)


# Open a TLS channel to your Chainstack node. If your endpoint requires an
# access token, attach it as call credentials as well.
channel = grpc.secure_channel(
    "your-trader-node.chainstack.com:1443",
    grpc.ssl_channel_credentials(),
)
stub = geyser_pb2_grpc.GeyserStub(channel)

# Subscribe to transactions touching a specific program
request = geyser_pb2.SubscribeRequest(
    transactions={
        "my_filter": geyser_pb2.SubscribeRequestFilterTransactions(
            account_include=["YOUR_PROGRAM_ID"]
        )
    }
)

# Subscribe is a bidirectional stream, so it takes an iterator of requests.
# Push-based: the loop body runs on every matching transaction.
for update in stub.Subscribe(iter([request])):
    process_transaction(update)
```

Built-in geo-redundancy and automatic failover keep bots operational during network spikes rather than dropping connections at exactly the wrong moment. Archive access back to Solana genesis is also included, meaning backtesting runs against the same infrastructure as your live trading setup rather than a separate, potentially inconsistent environment.

Warp Transactions

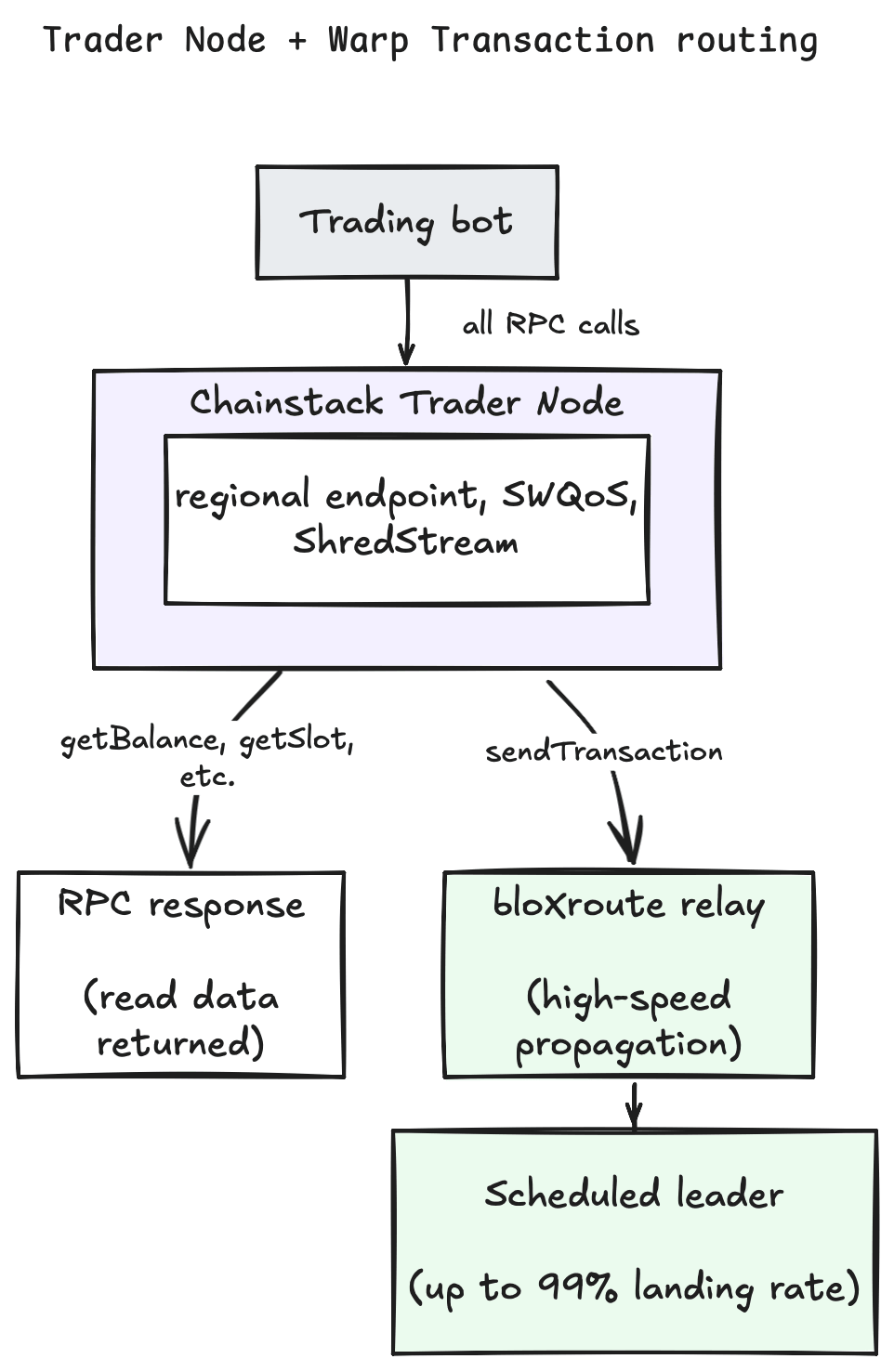

Warp Transactions handles the send side of the equation. The standard sendTransaction path gossips your transaction across the network toward the scheduled leader. Warp bypasses that entirely, routing sends directly through bloXroute’s relay network to the current leader.

The implementation requires nothing from you beyond switching your RPC endpoint. No changes to transaction construction, no tip instructions, no memo fields. Every call routes normally through the Trader Node except sendTransaction, which bloXroute picks up and delivers directly.

The practical result is landing rates of up to 99%, which is most meaningful for multi-program DeFi sequences where a missed transaction doesn’t just mean a lost opportunity but a broken execution chain.

```python
from solana.rpc.async_api import AsyncClient

# Before: standard public RPC, gossip propagation
client = AsyncClient("https://api.mainnet-beta.solana.com")

# After: Trader Node with Warp enabled
# All calls route normally except sendTransaction,
# which goes directly to the current leader via bloXroute
client = AsyncClient("https://your-trader-node.chainstack.com")


async def send(tx, signer):
    # Your existing transaction logic is completely unchanged.
    # Note: the exact send_transaction signature depends on your solana-py version.
    return await client.send_transaction(tx, signer)
```

Reads go through a node that’s already positioned close to the leader. Sends bypass gossip and arrive directly. The two halves of the execution problem get solved at the same endpoint, without stitching together multiple providers or maintaining relay infrastructure on top of your trading logic.

📖 Learn more about Solana Trader Node and Warp transactions in Chainstack documentation.

How to build a production trading stack on Solana

The simplest way to frame a production setup is three components working together: a regional Trader Node handling your RPC reads, Warp Transactions handling your sends, and your bot co-located in the same region. That combination gets you production-grade execution without writing or maintaining custom propagation infrastructure.

What’s worth measuring once you’re live is slightly different from what most people track. Raw RPC response time is the obvious metric, but it doesn’t tell you whether your stack is actually performing. Three numbers matter more: landing rate (anything below 95% should prompt investigation), slot lag between your node and the tip of the chain, and time-to-leader for your sendTransaction calls. Together they give you a real picture of execution quality rather than just connection speed.
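These three metrics can be tracked with very little code. The sketch below is a minimal illustration: the function names are hypothetical, the inputs are made-up sample values, and how you collect them (for example, where time-to-leader samples come from) is left to your own instrumentation.

```python
from statistics import median


def landing_rate(sent: int, landed: int) -> float:
    """Fraction of submitted transactions that landed on-chain."""
    return landed / sent if sent else 0.0


def slot_lag(node_slot: int, reference_slot: int) -> int:
    """How many slots your node trails a reference view of the chain tip."""
    return max(0, reference_slot - node_slot)


def summarize(sent, landed, node_slot, reference_slot, time_to_leader_ms,
              min_landing_rate=0.95):
    """Combine the three metrics and flag a landing rate below the threshold."""
    rate = landing_rate(sent, landed)
    return {
        "landing_rate": rate,
        "slot_lag": slot_lag(node_slot, reference_slot),
        "median_time_to_leader_ms": median(time_to_leader_ms),
        "investigate": rate < min_landing_rate,
    }


# Made-up sample window: 186 of 200 sends landed, node is 2 slots behind.
report = summarize(
    sent=200,
    landed=186,
    node_slot=1_000_000,
    reference_slot=1_000_002,
    time_to_leader_ms=[12.0, 15.5, 11.0, 40.0, 13.5],
)
print(report)
```

The median is used for time-to-leader so that a single slow send does not hide an otherwise healthy path. Tracking a high percentile alongside it would surface those outliers separately.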

Conclusion: Solana is the decentralized Nasdaq

Solana in 2026 isn’t just fast. It’s structurally different from what it was even two years ago. Localized fee markets mean a high-activity event on one program no longer ripples out and spikes costs on your core trading pairs. The infrastructure layer has matured to the point where professional-grade execution is accessible without building and maintaining custom relay infrastructure from scratch.

For most teams, the lowest-friction path to that execution environment is pairing Chainstack Trader Nodes with Warp Transactions. You get regional proximity to scheduled leaders, push-based streaming via ShredStream and Yellowstone gRPC, and direct-to-leader transaction delivery through bloXroute, all through a single endpoint with no custom setup required.

The full stack looks like this: Firedancer or Agave at the validator layer, Alpenglow bringing finality under 150ms, Jito and PBS creating a transparent and structured block space market, a Chainstack Trader Node handling reads and streaming, and Warp Transactions ensuring your sends actually land. Each component addresses a specific weakness in the naive setup. Together, they define what production-grade Solana trading infrastructure looks like in 2026.


FAQ

Trader Nodes are low-latency Solana endpoints optimized for trading workloads, typically with regional placement, fast data propagation, and better transaction delivery paths.

ShredStream improves how quickly block data reaches your node, while Yellowstone gRPC streams transactions, slots, and account updates directly from validator memory in a push-based format.

ShredStream does not directly improve transaction landing. It improves data freshness. Transaction landing depends more on the send path, relay path, SWQoS access, priority fees, and leader proximity.

Warp changes the sendTransaction path by routing transactions more directly toward the leader, reducing dependence on standard gossip propagation.

Firedancer has not replaced Agave. It is an important client-diversity milestone and performance path, but Agave remains a major validator client in production.

